AC-DC Converters

AI, robotics, and edge computing are driving unprecedented growth in data center energy demand. As rack densities climb past 100 kW and approach megawatt-class deployments, the industry faces a dual challenge: delivering exponentially more compute power while shrinking environmental impact.

Traditional 48 VDC power distribution, which transformed efficiency a decade ago, is reaching its physical limits. At today's power densities, low-voltage systems require massive conductors, generate significant resistive losses, and create thermal challenges that drive up both infrastructure costs and environmental impact.

The answer lies in ±400 VDC and 800 VDC architectures that fundamentally change how energy moves from the grid to the processor. This isn't an incremental improvement. It's a pathway to handling megawatt-scale loads with far less copper, reduced conversion losses, and lower cooling demand.

The goal isn't just more watts. It's cleaner, more efficient power delivery for a computing-intensive world.

A decade ago, the adoption of 48 VDC marked a milestone in data center efficiency. It cut resistive losses, standardized N+1 redundancy, and laid the groundwork for modern hyperscale infrastructure. Standards like OCP Open Rack V3*1 cemented 48 VDC as the baseline for open, efficient rack power.

But as AI workloads push rack power into the hundreds of kilowatts, the physics become challenging. At a fixed 48 VDC, current rises in direct proportion to power, and resistive losses rise with the square of that current. The result: heavier copper busbars, higher resistive losses, and thermal challenges that increase both capital and operating costs, not to mention environmental impact.

The current penalty at scale: At 48 VDC, feeding a 1 MW rack means more than 20,000 amps, requiring massive copper infrastructure and generating significant waste heat. By contrast, 800 VDC cuts current draw by about 94%, to roughly 1,250 amps, reducing copper mass from approximately 400 lbs to 40 lbs for the same load while raising end-to-end efficiency into the 94–96% range.
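The current figures follow from I = P / V. A minimal Python sketch, assuming a purely resistive DC path and ignoring conversion losses, reproduces the current at each voltage and shows why resistive (I²R) loss falls even faster than current when the same conductor is reused:

```python
def bus_current_amps(power_w: float, voltage_v: float) -> float:
    """DC bus current from I = P / V (conversion losses ignored)."""
    return power_w / voltage_v


def relative_conduction_loss(v_low: float, v_high: float) -> float:
    """Ratio of I^2*R loss at v_high versus v_low, same power, same conductor.

    Since I = P / V, loss = (P / V)^2 * R, so the ratio is (v_low / v_high)^2.
    """
    return (v_low / v_high) ** 2


RACK_POWER_W = 1_000_000  # 1 MW rack, as in the example above

i_48 = bus_current_amps(RACK_POWER_W, 48)
i_800 = bus_current_amps(RACK_POWER_W, 800)
current_reduction = 1 - i_800 / i_48

print(f"48 VDC:  {i_48:,.0f} A")
print(f"800 VDC: {i_800:,.0f} A")
print(f"Current reduction: {current_reduction:.1%}")
print(f"I^2R loss at 800 VDC vs 48 VDC (same conductor): "
      f"{relative_conduction_loss(48, 800):.2%}")
```

For a fixed conductor the loss ratio is (48/800)², about 0.36%. Real conductor sizing also depends on mechanical, thermal, and fault-current constraints, so this ratio is not a direct estimate of copper mass.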

*1 OCP Open Rack V3: An Open Compute Project specification defining mechanical and electrical standards for 48 VDC rack power distribution, designed to improve efficiency and interoperability in large-scale data centers.

| Metric | 48 VDC | ±400 VDC | 800 VDC |
|---|---|---|---|
| Max Practical Rack Power | <80 kW | 100–300 kW | >1 MW |
| Copper Mass (1 MW)* | ~400 lbs | ~80 lbs | ~40 lbs |
| End-to-End Efficiency** | ~90% | ~90–93% | ~94–96% |
| Conversion Stages | 3–4 | 2–3 | 2 |

* Order-of-magnitude illustration assuming similar runs and materials.

** Representative of well-designed systems at nominal load. Idle and transient conditions differ.

Higher voltage distribution reduces current for a given power level, offering a straightforward way to contain costs, losses, and material consumption. Every pound of copper saved reduces mining, refining, and transport impact. Every point of efficiency gained saves megawatt-hours and reduces carbon emissions over a facility's lifetime.
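To put "every point of efficiency" in concrete terms, here is a rough Python calculation of annual energy saved, assuming a constant 1 MW IT load running all year and using the ~90% and ~95% end-to-end figures from the table. It ignores cooling, load variation, and idle losses:

```python
HOURS_PER_YEAR = 8760


def input_power_kw(load_kw: float, efficiency: float) -> float:
    """Power drawn upstream to deliver load_kw at a given end-to-end efficiency."""
    return load_kw / efficiency


def annual_savings_mwh(load_kw: float, eff_before: float, eff_after: float,
                       hours: float = HOURS_PER_YEAR) -> float:
    """Energy saved per year by raising end-to-end efficiency, in MWh."""
    saved_kw = input_power_kw(load_kw, eff_before) - input_power_kw(load_kw, eff_after)
    return saved_kw * hours / 1000  # kWh -> MWh


# Assumption: 1 MW of IT load at constant draw, 90% -> 95% end-to-end efficiency
savings = annual_savings_mwh(1000, 0.90, 0.95)
print(f"{savings:.0f} MWh saved per year")
```

Under these assumptions, moving from 90% to 95% saves roughly 512 MWh per year for each megawatt of load, before counting any reduction in cooling energy.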

The next phase (±400 VDC and 800 VDC) extends the same efficiency philosophy that made 48 VDC successful, but at power densities that match the demands of modern AI infrastructure.

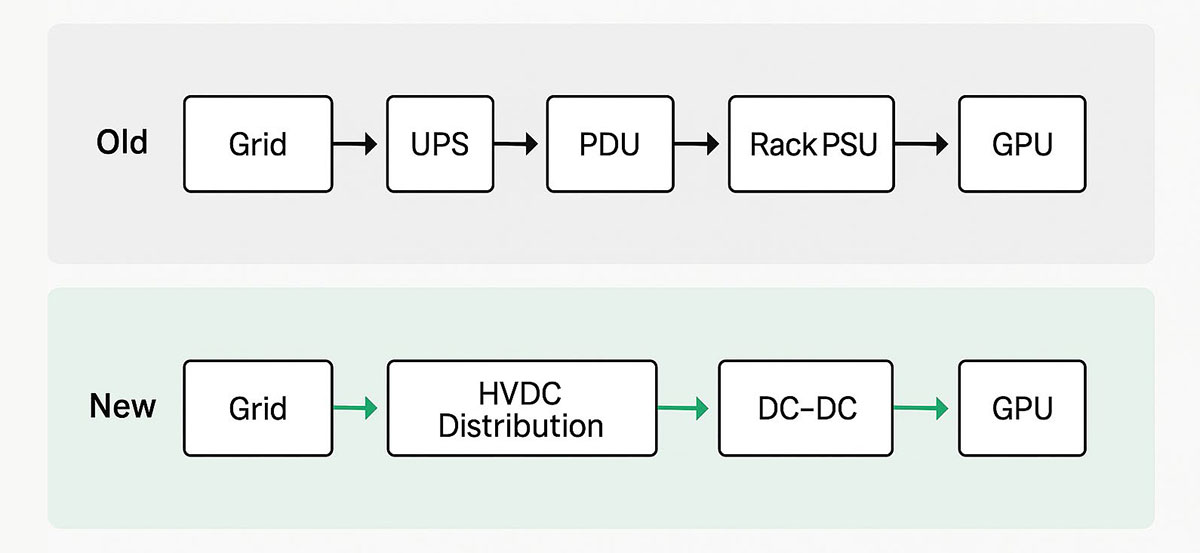

In legacy data centers, electricity passes through multiple conversion stages before reaching processors. Each conversion loses a few percentage points as heat, which cooling systems must then remove, so losses compound across stages and drive up total energy use and environmental impact.

HVDC architectures streamline that path. By transmitting 400 VDC or 800 VDC deep into the rack, operators minimize intermediate conversions and deliver power closer to the point of load with significantly higher efficiency.
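Because conversion stages sit in series, their efficiencies multiply. The Python sketch below compares a four-stage legacy chain with a two-stage HVDC chain; the per-stage efficiencies are hypothetical values chosen only so the totals land near the representative figures in the table above, not measurements of any product:

```python
from math import prod


def chain_efficiency(stage_efficiencies: list[float]) -> float:
    """End-to-end efficiency of series conversion stages: the product of each stage."""
    return prod(stage_efficiencies)


def waste_heat_kw(load_kw: float, stage_efficiencies: list[float]) -> float:
    """Heat dissipated in the conversion chain while delivering load_kw."""
    return load_kw / chain_efficiency(stage_efficiencies) - load_kw


# Hypothetical per-stage efficiencies, for illustration only
legacy_chain = [0.98, 0.97, 0.975, 0.97]  # four stages
hvdc_chain = [0.98, 0.975]                # two stages

for name, chain in (("legacy", legacy_chain), ("HVDC", hvdc_chain)):
    print(f"{name}: {chain_efficiency(chain):.1%} end-to-end, "
          f"{waste_heat_kw(1000, chain):.0f} kW of heat at 1 MW load")
```

Every kilowatt of conversion loss also becomes heat that the cooling plant must remove, so the facility-level saving is larger than the conversion-loss difference alone.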

Key architectural advantages:

When executed correctly, these changes can reduce copper requirements by roughly 30%, improve facility efficiency by several percentage points, and lessen cooling energy demand due to lower waste heat—though results depend on specific layouts and controls.

Power availability has become as critical as power efficiency. Data-center energy demand is rising sharply and clustering near urban centers and fiber hubs, testing the limits of local grid capacity.

According to the Pew Research Center*2, U.S. data centers consumed about 183 TWh in 2024—just over 4% of national electricity use—with projections reaching approximately 426 TWh by 2030. That growth equates to the annual consumption of tens of millions of homes.
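The growth figures imply a steep compound rate. A short Python check, assuming a rough average of 10.5 MWh per U.S. household per year (an assumption for illustration, not a figure from the Pew study):

```python
BASE_YEAR, BASE_TWH = 2024, 183.0
TARGET_YEAR, TARGET_TWH = 2030, 426.0
AVG_HOME_MWH_PER_YEAR = 10.5  # assumption: rough average U.S. household use

years = TARGET_YEAR - BASE_YEAR
cagr = (TARGET_TWH / BASE_TWH) ** (1 / years) - 1  # compound annual growth rate
added_twh = TARGET_TWH - BASE_TWH
homes_equivalent = added_twh * 1_000_000 / AVG_HOME_MWH_PER_YEAR  # 1 TWh = 1e6 MWh

print(f"Implied growth: {cagr:.1%} per year")
print(f"Added demand: {added_twh:.0f} TWh, about {homes_equivalent / 1e6:.0f} million homes")
```

Under that assumption, the added 243 TWh corresponds to about 23 million homes, consistent with "tens of millions."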

Grid constraints are already impacting operations. In dense markets, utilities have limited new connections and curtailed loads during fault events. In mid-2024, a surge suppression failure in Northern Virginia triggered the emergency shutdown of 60 data centers*3. Within minutes, roughly 1,500 MW of load, enough to power over a million homes, was forced offline.

This context reframes efficiency as more than an operational metric—it's a resilience strategy. Forward-thinking data-center designs now prioritize:

HVDC addresses the efficiency component by cutting losses and improving capacity utilization. When paired with grid-aware controls and renewable integration, it becomes part of a comprehensive resilience strategy—using available energy more wisely while enabling cleaner future growth.

*2 Pew Research Center (2024): Independent research organization reporting on U.S. energy use and infrastructure trends. Their study on national data center electricity consumption provides insight into industry growth and grid capacity challenges.

*3 Reuters – "Power Issues Cause Massive Virginia Data Center Shutdown" (2024): Reuters news report detailing how a surge suppression failure at a utility substation in Northern Virginia caused 60 local data centers to shut down, temporarily removing approximately 1,500 MW of demand from the grid.

Transitioning to 400 VDC and 800 VDC introduces new design and operational responsibilities. Success requires treating safety, efficiency, and sustainability as integrated design requirements rather than afterthoughts.

Higher voltages demand strict adherence to IEC and UL standards for creepage, clearance, and isolation. Systems should incorporate:

Next-generation converters leverage new materials and topologies that simultaneously improve efficiency and reduce environmental footprint:

Sustainability in power design is increasingly measured through material efficiency and lifecycle longevity, not just energy savings:

Power systems must integrate seamlessly with existing infrastructure management:

Validation under AI-class transient profiles is critical before deployment to ensure systems can handle rapid load fluctuations without instability.
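One first-order check that transient validation can include is whether bus capacitance can carry a sudden load step until the upstream converter responds. The Python sketch below uses a simple energy balance (½CV²) over a hypothetical step profile. The bus voltage, capacitance, response times, and 2% droop limit are all illustrative assumptions, and the model ignores ESR, wiring inductance, and control-loop dynamics, so it complements rather than replaces hardware testing:

```python
import math


def bus_voltage_after_step(v0: float, capacitance_f: float,
                           step_w: float, response_s: float) -> float:
    """Bus voltage after the capacitor alone supplies a load step for response_s.

    Energy balance: 0.5*C*V0^2 - step_w*response_s = 0.5*C*V_end^2.
    Returns 0.0 if the stored energy is exhausted.
    """
    remaining = v0 ** 2 - 2 * step_w * response_s / capacitance_f
    return math.sqrt(remaining) if remaining > 0 else 0.0


def passes_droop_limit(v0: float, v_end: float, max_droop_fraction: float) -> bool:
    """True if the voltage drop stays within the allowed fraction of nominal."""
    return (v0 - v_end) / v0 <= max_droop_fraction


# Hypothetical synthetic AI-style load steps: (step in watts, converter response in s)
profile = [(50e3, 20e-6), (100e3, 50e-6), (300e3, 200e-6)]
V_BUS = 800.0     # assumed nominal bus voltage
C_BUS = 2e-3      # assumed bulk capacitance in farads
MAX_DROOP = 0.02  # assumed allowable droop (2%)

for step_w, t_resp in profile:
    v_end = bus_voltage_after_step(V_BUS, C_BUS, step_w, t_resp)
    ok = passes_droop_limit(V_BUS, v_end, MAX_DROOP)
    print(f"{step_w / 1e3:.0f} kW step, {t_resp * 1e6:.0f} us: "
          f"{v_end:.1f} V -> {'PASS' if ok else 'FAIL'}")
```

With these numbers the 300 kW step fails (about 4.8% droop), showing how a profile that looks benign at steady state can still exceed a droop budget during fast load swings.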

Because each data center has unique layout and load requirements, modular approaches offer maximum flexibility for scaling and serviceability. Instead of redesigning entire electrical backbones, operators can upgrade modules as efficiency and capacity improve.
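Modular designs are often sized with N+1 redundancy, the same scheme 48 VDC power shelves standardized. A hypothetical Python sizing helper, assuming identical 25 kW modules that share load equally (the module rating is illustrative, not a specific product):

```python
import math


def modules_for_n_plus_1(load_kw: float, module_kw: float) -> int:
    """Modules needed to carry load_kw with one redundant module (N+1)."""
    n = math.ceil(load_kw / module_kw)
    return n + 1


def per_module_loading(load_kw: float, module_kw: float) -> float:
    """Fraction of rating each module carries when all N+1 modules share load equally."""
    count = modules_for_n_plus_1(load_kw, module_kw)
    return load_kw / (count * module_kw)


MODULE_KW = 25  # hypothetical module rating

for rack_kw in (100, 200, 300):
    count = modules_for_n_plus_1(rack_kw, MODULE_KW)
    loading = per_module_loading(rack_kw, MODULE_KW)
    print(f"{rack_kw} kW rack: {count} modules, {loading:.0%} loading each")
```

Adding capacity then means adding modules rather than re-engineering the distribution path. Smaller modules also reduce redundancy overhead, since one spare is a smaller fraction of the total.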

Typical building blocks include:

When evaluating solutions, consider trade-offs among efficiency, thermal headroom, EMI performance, service access, and total cost of ownership over the system lifecycle.

The benefits of HVDC extend beyond electrical efficiency into measurable environmental improvements:

These aren't abstract sustainability goals—they're measurable design outcomes that lower both energy intensity and embodied impact over time.

Murata is developing solutions and collaborating with leading compute, rack, and power-architecture innovators to accelerate this transition, ensuring tomorrow's data centers can scale responsibly with efficiency and sustainability built in from the start.

We're investing in technologies that make high-voltage distribution the default for AI-scale computing:

In the compute rack, ±400 VDC is converted to 48 VDC to supply the server input. This is where our broad, industry-leading portfolio delivers clear differentiation.
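The ±400 VDC to 48 VDC stage carries the same power at very different currents. A Python sketch, assuming the converter is fed pole-to-pole (800 V across the ±400 V bus), a hypothetical 120 kW output, and an illustrative 97.5% efficiency:

```python
def conversion_currents(output_kw: float, v_in: float, v_out: float,
                        efficiency: float) -> tuple[float, float]:
    """Input and output currents for a DC-DC stage.

    output_kw: power delivered at v_out
    efficiency: stage efficiency (0-1); input power = output power / efficiency
    """
    p_out = output_kw * 1000
    p_in = p_out / efficiency
    return p_in / v_in, p_out / v_out


# Assumptions: pole-to-pole feed (800 V), hypothetical 120 kW load, 97.5% efficiency
i_in, i_out = conversion_currents(120, v_in=800, v_out=48, efficiency=0.975)
print(f"Step-down ratio: {800 / 48:.1f}:1")
print(f"Input current:  {i_in:.0f} A at 800 V")
print(f"Output current: {i_out:.0f} A at 48 V")
```

Under these assumptions, the feed into the rack carries only about 154 A, while the 2,500 A at 48 V is confined to the short path inside the rack.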

Murata provides modular building blocks for HVDC-ready architectures, including front-end AC-DC modules, high-voltage DC-DC converters, point-of-load regulators, and integrated protection and monitoring.

The world will continue to compute more—training AI models, processing data, connecting billions of devices. That reality makes efficiency and resource stewardship more critical than ever.

Transitioning from 48 VDC to 400 VDC and 800 VDC is more than an upgrade. It's a foundational change in how data centers deliver, distribute, and manage power. While it calls for thoughtful validation and operational planning, the benefits are clear: higher density, lower losses, and stronger alignment with sustainability goals under increasingly constrained grid conditions.

This isn't about chasing green labels. It's about building smarter, leaner, and more responsible infrastructure where every watt does more.

Murata is focused on enabling that shift, delivering power technologies that support meaningful scale with significantly lower environmental impact.